In this project, datasets are image stacks from a brightfield microscope. That part is always the same. Training datasets, though, additionally come with corresponding skeletons, as `*.swc` files. In the context of model training, the SWC skeletons are the labels for the data, which are the micrographs.

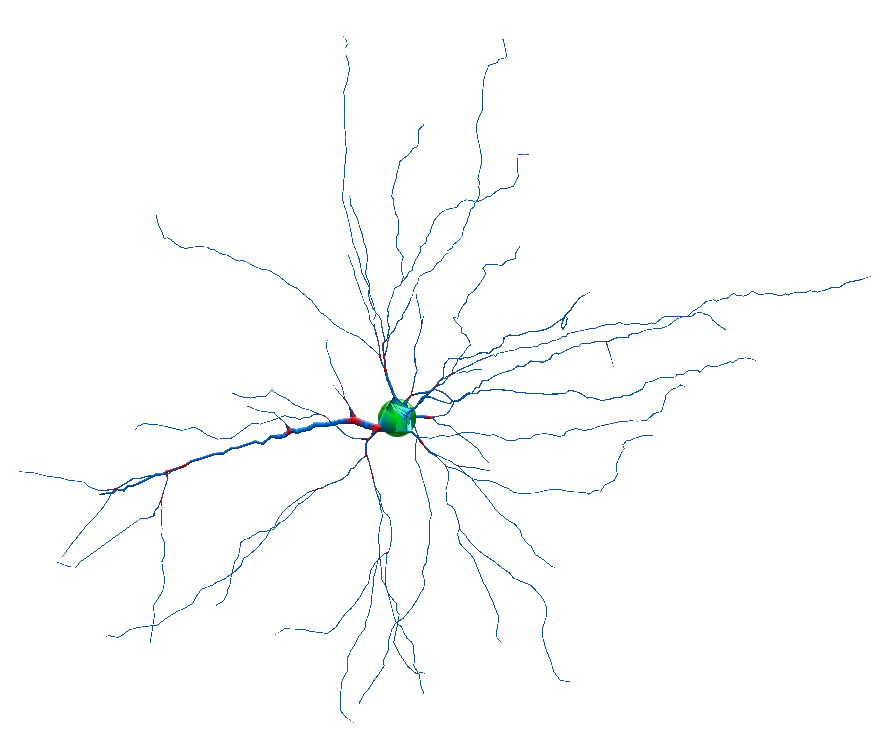

Example SWC from Janelia's SharkViewer
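Since the SWC skeletons serve as training labels, it may help to see how little is needed to read one. SWC is a plain-text format: one node per line with seven whitespace-separated fields (id, type, x, y, z, radius, parent id), `#` for comment lines, and a parent id of `-1` for a root. The following is a minimal sketch, not this project's actual loader; the names `SwcNode`, `parse_swc`, `skeleton_edges`, and the sample data are all hypothetical and made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class SwcNode:
    """One line of an SWC file (hypothetical helper type, not from the project)."""
    node_id: int
    type_id: int    # common convention: 1 = soma, 2 = axon, 3 = dendrite
    x: float
    y: float
    z: float
    radius: float
    parent_id: int  # -1 marks a root node


def parse_swc(lines: Iterable[str]) -> Dict[int, SwcNode]:
    """Parse SWC text lines into a dict keyed by node id."""
    nodes: Dict[int, SwcNode] = {}
    for raw in lines:
        line = raw.strip()
        # Skip blank lines and '#' comment/header lines.
        if not line or line.startswith("#"):
            continue
        fields = line.split()
        if len(fields) < 7:
            raise ValueError(f"expected 7 fields, got {len(fields)}: {line!r}")
        node = SwcNode(
            node_id=int(fields[0]),
            type_id=int(fields[1]),
            x=float(fields[2]),
            y=float(fields[3]),
            z=float(fields[4]),
            radius=float(fields[5]),
            parent_id=int(fields[6]),
        )
        nodes[node.node_id] = node
    return nodes


def skeleton_edges(nodes: Dict[int, SwcNode]) -> List[Tuple[int, int]]:
    """Return (parent_id, child_id) pairs, i.e. the segments of the skeleton."""
    return [(n.parent_id, n.node_id) for n in nodes.values() if n.parent_id != -1]


# Made-up three-node skeleton: a soma with a two-segment dendrite.
SAMPLE_SWC = """\
# hypothetical sample, for illustration only
1 1 0.0 0.0 0.0 5.0 -1
2 3 10.0 0.0 0.0 1.0 1
3 3 20.0 5.0 1.0 0.8 2
"""

if __name__ == "__main__":
    parsed = parse_swc(SAMPLE_SWC.splitlines())
    print(len(parsed), "nodes;", skeleton_edges(parsed))
```

To read a real file, something like `parse_swc(open(path))` should work, since a file object iterates over its lines. Real-world SWC files do not always follow the format strictly, so a production loader would likely need to be more forgiving than this sketch.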

Sample datasets from the following sources have been exercised:

- Allen Institute

- ShuTu

The goal is also to enable folks to upload (or link to) their own brightfield image stacks and generate SWC files on Colab (read: the inference phase of the neuron detector model's lifecycle). Here lies all manner of lame ETL data wrangling to be done around weak data standards.

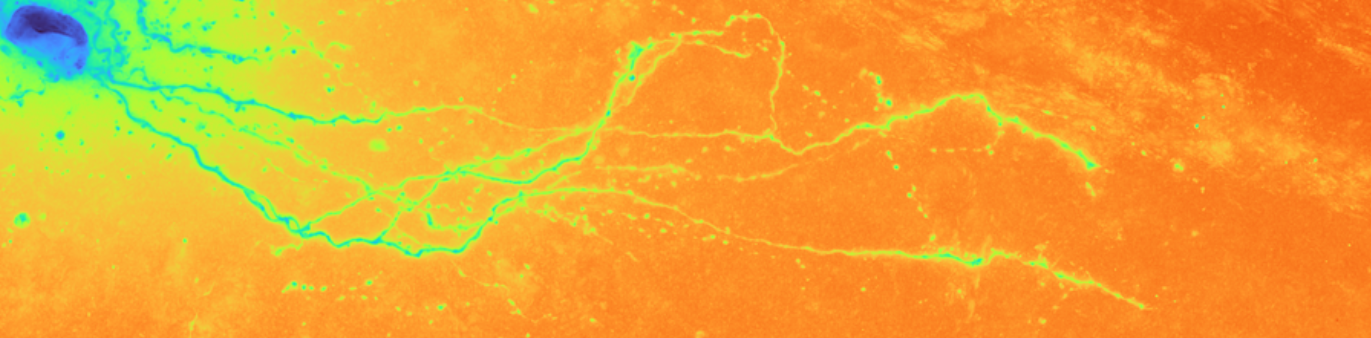

The images might be rectilinear, as in the case of data from the Allen Institute. Or the images might be the result of stitching that is not rectilinear. (The Allen's data is stitched, but it is also rectilinear, as can be seen from subtle banding artifacts in the lighting of some images.) The following virtual slide stitching image was produced by ShuTu.